Energy Symbiosis: Powering Compute for AI & Bitcoin Simultaneously

Speakers/Moderators

Jesse

David Chernis

Rick Margerison

Rick’s focus is on the “energy symbiosis” between Bitcoin mining and AI. By architecting modular, liquid-cooled infrastructure, he enables high-density clusters to operate as flexible loads for the grid while solving the complex electrical handoffs required for parallel compute. A prolific inventor and contributor to the Open Compute Project (OCP) since 2017, Rick has also served as a lead for the ITE and Immersion Requirements communities. He brings a hardware-first perspective to the stage, demonstrating how specialized power distribution and shared infrastructure can turn the competition for energy into a collaborative, efficient model for global compute.

Session

Overview

Energy Symbiosis: Powering Compute for AI & Bitcoin Simultaneously brought together David Chernis of CPower, Rick Margerison, and Billy Boone of Simple Mining, hosted by Jesse of BlocksBridge, to discuss whether Bitcoin mining and AI/HPC workloads can share power infrastructure or whether they will increasingly compete for the same sites.

The panel contrasted Bitcoin mining’s low-cost, flexible energy profile with AI data centers’ higher capital intensity, greater uptime requirements, and ability to pay more for power. The speakers discussed how miners may be displaced from prime grid-connected locations, while still playing a role in energy arbitrage, demand response, curtailment, and seeding early-stage power projects.

Key themes included ERCOT flexibility requirements, ancillary services, battery storage, rack-level AI workload management, liquid cooling, immersion infrastructure, and vertical integration around land, permits, transmission, and power purchase agreements. The discussion also explored how lessons from Bitcoin mining could influence AI factory design, especially in cooling, modular infrastructure, and energy-aware operations.

The session framed Bitcoin miners as sophisticated energy operators whose flexibility may remain valuable even as AI demand grows. It also highlighted the likelihood that mining hardware moves toward cheaper, more interruptible power sources, while high-value AI workloads claim more constrained grid-connected capacity.

It's great to be here at the Bitcoin Conference. I'm excited for this panel. We have a lot of great minds here on stage, and I'm excited to talk about this topic. My name is Jesse. I work at BlocksBridge. We advise public bitcoin miners and HPC companies in the space.

I wanted to start with just a brief introduction of my fellow panelists. Who are you, what do you do, and what's a fun fact about yourself? We'll start with you, Bill.

Yeah, for sure. Thank you, Jesse. My name is Billy Boone. I'm with Simple Mining. We help investors get exposure to bitcoin mining. We have ten data centers in Iowa. We're really good at running computers and building facilities to run computers in Iowa. Interesting fact about myself: I've had a lot of people come up to me and say, “Wow, you're way taller than I thought you would be,” because they've only ever seen me online. I've actually won a dunk contest before, so it helps to be tall for stuff like that.

I'm Rick, and my background is HPC and artificial intelligence. Back in the day, starting back in 2010, I helped develop products for Nvidia and other companies. For the last ten years, I've been doing nothing but liquid cooling, consulting for different companies and helping bring different types of products to market. Fun fact about myself: I'm also a musician, and have been for most of my life. I'm a drummer.

I'm David Chernis, director of flexible compute platforms at CPower. I got really involved with the mining sector in 2018 as they started showing up in Niagara Falls and Buffalo. From a grid reliability standpoint, we realized these are the greatest demand response assets the grid has ever had, because they can curtail in minutes to seconds when power is constrained. So we're really excited for this moment and this discussion here. Fun fact: people say I'm actually shorter than they expect me to be. And I too am a musician, so we have all of that in common.

Getting to the topic of this panel, anybody who hasn't been living under a rock has been able to see that there has been quite a trend in this past year or so. Bitcoin miners are slowly, or not so slowly, transitioning into the HPC space. There's obviously massive demand coming from all of these new computational workloads, Anthropic, what have you. A lot of people ask: are these two worlds, these two computational loads, actually compatible? Are they competing for the same power? Is there a way for them to work together, or is one going to displace the other? The three panelists to my left have a take on this and can talk a little bit about it. Maybe we can start with you, Billy. Are they compatible? Can they live together and coexist?

I would say that they generally cannot live together because they're competing for the same thing. The common denominator between an AI company, a neo cloud, and a Bitcoin miner is that they need physical space. They need land to host their equipment for physical operations, and they need some sort of power source. That's the common denominator between the two.

But it starts to deviate directly from there in terms of cost. The cost to build an AI facility is ten times the cost of running a Bitcoin mining facility, and AI companies are much less CapEx sensitive. They are also much less sensitive to the cost of power. For example, Bitcoin miners can afford maybe $40 a megawatt hour, whereas an AI company can afford $80 to $200 a megawatt hour.

Ultimately, what we're seeing on our end from companies that want to invest in training and inference is that there's an unlimited amount of demand. The issue is not the demand. The issue is the physical constraints, like getting a transformer ordered from China and getting delayed for six months, or making sure you get the right permits or approvals from the city council. Those are the issues. Ultimately AI companies are going to be able to outbid miners. At the end of the day, miners will probably end up getting pushed away from those places where they get outbid by AI companies and these neo clouds.

There are definitely valid points there. Some of the larger miners out there are starting to move toward the AI space. The problem with that is that their investors are already expecting their returns. They're expecting a certain amount of return, their ROI, and the amount of time that it takes. In my opinion, they're not going to be able to turn those miners off immediately. They're going to have to make that payment and make that CapEx.

You're saying the miners are not going to be able to turn off immediately?

No, because people are expecting their returns. Unless you personally invested in those miners and can just sell them off, the companies that provided the funding for those mining operations still have to be repaid. That means there's going to need to be a little bit of shared space for a while, until you've satisfied people and you're not getting sued.

It will come down to how fast they can liquidate it. If you liquidate your entire fleet and you have a buyer for it, then you can pay back the investors who funded your Bitcoin mining operation. If you can get funding from someone else who will pay more to convert it to an AI site, and they're willing to help you liquidate your fleet and get a clean cut so you can start putting GPUs in there, that's where the difference will come in. I think we're already starting to see that with public companies that are making a complete 180-degree pivot.

I wanted to talk a little bit about the coexistence of AI, HPC, and Bitcoin mining from a grid perspective. David has a lot of thoughts in that regard. You do a lot of that work when it comes to the relationship between data centers, Bitcoin mines, and the grid. How are you thinking about these two different types of loads when it comes to ERCOT and other power markets, and the grid monetization opportunities that are there?

Just to echo the sentiments of Rick and Billy, certainly the publicly traded miners who have a fiduciary duty for profitability are making a hard pivot to AI hosting. The smaller and midsize miners are taking a slower, more measured approach. We are seeing more of a load shift, but still as a hybrid load: mining and then just starting to add some GPU architecture there.

From a grid standpoint, if you look at Senate Bill 6 in ERCOT, which mandates all large loads over 75 megawatts to be flexible, flexibility on the mining side is actually really valuable. If you're trying to get more capacity for the AI side of your house, when the utility and the ISO can look at the flexibility lever, that does have value. Then there are all of the demand response revenues.

If we look at my state, New York, and I mentioned this in an earlier panel, you have access to ancillary services now with the day-ahead and real-time markets. You can actually sell your megawatts back in near real time when the price is above your profitability strike price to mine, and in some cases drive your blended cost per megawatt hour for the month into negative territory in the most expensive peak months.
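The sell-back mechanic described here can be sketched in a few lines. This is a minimal illustration under stated assumptions, not CPower's settlement logic: the function name `blended_cost_per_mwh`, the fixed-price purchase model, and every price below are hypothetical, chosen only to show how a few high-priced hours can pull the blended cost below zero.

```python
def blended_cost_per_mwh(hourly_prices, load_mw, fixed_price, strike_price):
    """Return (blended cost in $/MWh consumed, hours curtailed).

    Hypothetical model: the site buys load_mw every hour at fixed_price.
    In any hour where the real-time price exceeds strike_price, it stops
    mining and sells that hour's energy back at the real-time price.
    """
    purchase_cost = 0.0
    sellback_revenue = 0.0
    consumed_mwh = 0.0
    curtailed_hours = 0
    for price in hourly_prices:
        purchase_cost += fixed_price * load_mw  # one hour of contracted energy
        if price > strike_price:
            sellback_revenue += price * load_mw  # curtail and sell back
            curtailed_hours += 1
        else:
            consumed_mwh += load_mw  # keep mining this hour
    if consumed_mwh == 0:
        raise ValueError("site never ran, so blended cost is undefined")
    return (purchase_cost - sellback_revenue) / consumed_mwh, curtailed_hours


# Illustrative day: 20 ordinary hours at $30/MWh, 4 scarcity hours at $900/MWh.
prices = [30.0] * 20 + [900.0] * 4
cost, hours_off = blended_cost_per_mwh(
    prices, load_mw=10.0, fixed_price=40.0, strike_price=100.0
)
print(f"blended cost: {cost:.2f} $/MWh, curtailed {hours_off} h")
# prints "blended cost: -132.00 $/MWh, curtailed 4 h"
```

With no scarcity hours the same function simply returns the fixed price, which is why the effect only shows up in peak months.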

We're thinking about those kinds of models: having exposure to ancillary, day-ahead, and real-time markets, with fully automated response in seconds, even being able to do regulation as the future state of an AI factory when we have all of the flexibility levers that we're going to talk about later. So far, what we're seeing on our side is that the value of demand response, the flexibility on the mining side, is keeping mining on these hybrid sites for now.

I'd like to double click on that for a second. Being at the Bitcoin Conference, I'm sure a lot of people are familiar with the nature of Bitcoin as a very flexible load that has been able to be very responsive, in particular in Texas but elsewhere as well. Most people think AI is much less flexible than it might actually be. I know you have thoughts in that regard. You have this concept of walls of batteries surrounding AI facilities. You have some thoughts on inference. Maybe you can share a little bit more about that and the flexibility of AI in the future.

My favorite topic. We've been preparing for this convergence of these two loads for two or three years, I guess since ChatGPT took off. The way we're envisioning flexibility for AI factories, there are three different flexibility levers.

First, utility power. You have all of the transient peaks, very spiky loads that UPS systems used to be able to handle. Now we're thinking about supercapacitors, solid-state capacitors, and battery storage acting as load smoothing, like a UPS effect.

Then you have unstable utility power. In PJM, people are complaining about unstable power. It's not pure enough to run a cheese-making plant, and that is not going to be stable enough for your GPU architecture. So reason two for storage is power quality from the utility.

Reason three is regulation. SB 6 in ERCOT requires some kind of flexibility for 50 hours a year, or maybe less. Storage gives you that availability to deploy at scale. Our partners can bring hundreds of megawatts of storage to a site quickly. Then for the value of energy arbitrage, ERCOT is thinking about AI factories, miners, and data centers as power platforms, and even as future-state utilities, with their own utility-scale storage and bring-your-own generation.

We can also flex at the rack. You've probably seen software companies like Emerald AI, Lancium, Supermicro, and others that can actually flex a GPU cluster running inference loads at scale without stopping the workload and while maintaining the SLA, the quality of service. So outside with the storage, and inside at the rack, basically like hash rate optimization for large inference clusters.

We have one of the true pioneers and innovators in the cooling space, and I'd love to hear Rick talk a little bit about flexibility and direct liquid cooling.

That was going to be my next question for Rick. In the cooling space, and generally speaking in the buildout phase of these data centers, can you share some thoughts on how you can build out these sites with this specific topic in mind, and how to adapt specifically to AI or Bitcoin mining, or to have optionality?

This is a deep topic, so I'm just going to do a flyby on it. As has been pointed out, powering an AI data center is massively more expensive. True power, redundant power, redundant data, battery backup, generation: all those different things are what really make a true AI data center. If you start putting mining equipment inside of there, how do you make that money back? If you're in hard times, scraping by, and not making the money you want to be making, or you're in the negative, having that massive amount of money that you're spending because you have to have twice as much power, and both have to be lit up at the same time, it really wrecks the whole concept of making money in Bitcoin.

Making things more efficient and smarter inside of the data center, and going from high-voltage DC directly to the system so you're not doing conversions and creating losses, makes a huge difference. Using liquid cooling is a massive move in the right direction. Removing your water usage and all the things that actually cost money, that take the dollar out of your pocket, really starts to hit home.

The real line of sight is knowing this is how you really make money. It's dotting the i's and crossing the t's of the planning, and knowing what you're doing from start to finish to make sure that at the end of the day you're actually making the money you expect to make. You're not losing money, and you're not running hotter.

As an example, a lot of people are still running the old J Pros. If you go from a 20 degree Celsius inlet temperature all the way to a 45 degree inlet temperature, if you're running in Texas, you think you're getting whatever it is, 28 to 32 watts per terahash, but you're not. As soon as you go above that 25 degrees Celsius, that watts-per-terahash figure really starts to drastically change. You're not really making the money you think you're making. You have to take into account everything: the proper cooling, the proper conversions, that sort of stuff. It really does make a huge difference.

One thing that I'll jump in on is the way that I would look at it, and how miners will come into play, is related to the KPMG report that was published in 2024. It was Bitcoin Mining as an ESG Imperative. The thesis there was that Bitcoin miners incentivize renewable energy buildout because they don't have to have 99.98% uptime like a lot of these AI servers. They can be much more intermittent and much more flexible.

When you go to a place where there's just water flowing off a cliff and you have no idea if that project would make any sense, you can almost run a pilot project with Bitcoin miners to build a small-scale level of infrastructure to see if it even makes sense. Does this even work? That's where Bitcoin miners thrive because the cost of power is essentially zero. From there, that can grow into potentially a much bigger site that eventually an AI company can take over because the infrastructure is already there. They have the networking there. They have all the components there that would make it a Tier 4 or Tier 5 data center. But it has to start somewhere. It's almost seeding a site that may not be anything at this point in time, but eventually can grow into a bigger one.

I love that you mentioned that KPMG report. I'm not going to call him out specifically, but one of the people who worked on that is sitting right here in the audience.

I wanted to go back for a second to Rick and ask: what do you think people building out AI and people involved in HPC development are not learning from Bitcoin mining that they should be learning when it comes to buildout, cooling, and building racks? What lessons learned do you think are missing there? I think you also have some thoughts when it comes to specialization of hardware.

The reality is that Bitcoin miners made liquid cooling in the data center a reality. We were actually the pioneers in the space. Liquid cooling has been around forever, but it wasn't until very recently, in the last two or two and a half years, that you see Dell starting to offer liquid cooling, HP, and everybody else now doing liquid cooling. There have been these little specialty places I used to work for in the past, but it's only in the last two years that it has really come into play. It's only because all the Bitcoin miners are finally going, “Yeah, I can make this happen. I can make it a reality.” They're proving it. We have our failures and we have our wins and we have everything in between, but we've actually made it a reality for the AI side of things.

Having the ability to mix and match some of the Bitcoin miners with HPC GPUs matters. Teleflex has a nice tank over there that you can take a look at. They have the WhatsMiner product in there, and they have the AI side of things in a single tank. If you're going to buy this infrastructure, make sure that infrastructure is flexible. Make sure it's modular so that when you're making those changes, if you're already running Bitcoin miners right now and you're trying to get into the AI space, buy the right product. Get something that allows you to flex and move into where you want to be, not today, not tomorrow, but next year or the year after that. Make the right purchase right up front. Sometimes it costs a little bit more up front, but in the long run, if you're playing the long game, that's where it counts.

Billy, I believe Neil Young at some point said, “My, my, hey, hey, Bitcoin mining is here to stay,” and I tend to agree with that. Can you speak a little bit about where you see the future of Bitcoin miners, being on this frontier industry, and possibly the owners of infrastructure, those who actually control the land and have the transmission and the permits?

To your previous question, the one thing that AI companies could learn from Bitcoin mining companies would be vertical integration: owning the land and the power, and having control over the power purchase agreement that your operation is running on. There is no shortage of mining companies that were running in a jurisdiction that wasn't favorable, or they didn't have complete vertical integration or control over their power purchase agreement, and it blows up their operation because their entire business is energy arbitrage. Your input is buying electricity and converting it into Bitcoin. There has to be a spread there. If there's not a spread, or if your power purchase agreement changes, or if the government imposes some sort of exorbitant tax, then that blows up your entire operation.
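The "spread" in this energy arbitrage can be made concrete with a small calculation. The sketch below is only an illustration: the function names are hypothetical, and the hash price, efficiency, and power prices are assumed values rather than figures from the panel (apart from the $40 per megawatt hour mentioned earlier). It relies on one physical identity: a machine rated at e joules per terahash draws e watts for each terahash per second it runs.

```python
def revenue_per_mwh(hashprice_usd_per_th_day, efficiency_j_per_th):
    """Mining revenue earned per MWh of electricity consumed.

    One TH/s at e J/TH draws e watts, so over 24 hours it uses
    24 * e watt-hours, which is 24 * e / 1_000_000 MWh.
    """
    if efficiency_j_per_th <= 0:
        raise ValueError("efficiency must be positive")
    mwh_per_th_day = efficiency_j_per_th * 24 / 1_000_000
    return hashprice_usd_per_th_day / mwh_per_th_day


def spread_per_mwh(hashprice_usd_per_th_day, efficiency_j_per_th, power_price):
    """Revenue minus power cost per MWh. Negative means mining at a loss."""
    return revenue_per_mwh(hashprice_usd_per_th_day, efficiency_j_per_th) - power_price


# Assumed inputs: $0.048 per TH/s per day, a 20 J/TH fleet.
breakeven = revenue_per_mwh(0.048, 20.0)
print(f"breakeven power price: {breakeven:.2f} $/MWh")
print(f"spread at $40/MWh:  {spread_per_mwh(0.048, 20.0, 40.0):.2f} $/MWh")
print(f"spread at $120/MWh: {spread_per_mwh(0.048, 20.0, 120.0):.2f} $/MWh")
```

Under these assumed inputs the breakeven is about $100 per MWh, so a change in the power purchase agreement from $40 to $120 flips the operation from profitable to loss-making without anything else changing.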

I wanted to use these last few minutes to hear from all of you a little bit more about what you see happening in the next couple of years when it comes to Bitcoin mining and HPC integration. You all have your own specialties. Billy, maybe you can talk a little bit more about hash rate, difficulty, and all of that.

I've written ad nauseam about how I think difficulty eventually has to face some headwinds. There have to be some headwinds on Bitmain's ability to keep coming out with another machine that's a 30% efficiency improvement. They are closing in on zero. The reality is, at this point in time, you can't convert zero watts or zero joules into a terahash.

Go back to 2016 or 2017, and you're starting around 150 joules per terahash. That was what a mining machine was able to do: take in 150 joules and turn it into a trillion hashes. Now you have something like the S23, which is around 9.5 joules per terahash. That's a significant improvement, and it didn't just go straight there. It was more linear. But now they might go from 9.5 to maybe 5. It's going to be much harder when you're getting into quantum tunneling and making chips at these sizes. The competitive chip right now is being built on two nanometers. As you start to get smaller and smaller, it's going to be harder to make significant improvements like it was in the past.
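The arithmetic behind those efficiency figures is worth spelling out. The sketch below uses only the numbers quoted here (150, 9.5, and 5 joules per terahash, and 30% per machine generation); the function names are hypothetical, and treating every generation as a uniform 30% gain is a simplifying assumption.

```python
import math


def improvement(old_j_per_th, new_j_per_th):
    """Return (improvement factor, fractional energy reduction)."""
    factor = old_j_per_th / new_j_per_th
    reduction = 1.0 - new_j_per_th / old_j_per_th
    return factor, reduction


def generations_needed(old_j_per_th, new_j_per_th, step_gain=0.30):
    """Number of uniform step_gain improvements to get from old to new."""
    return math.log(new_j_per_th / old_j_per_th) / math.log(1.0 - step_gain)


past_factor, past_cut = improvement(150.0, 9.5)
next_factor, next_cut = improvement(9.5, 5.0)
print(f"150 -> 9.5 J/TH: {past_factor:.1f}x, {past_cut:.1%} less energy per terahash")
print(f"9.5 -> 5 J/TH:   {next_factor:.1f}x, {next_cut:.1%} less energy per terahash")
print(f"30% steps, 150 -> 9.5: {generations_needed(150.0, 9.5):.1f}")
print(f"30% steps, 9.5 -> 5:   {generations_needed(9.5, 5.0):.1f}")
```

The past gain is roughly 16x, while the hoped-for step from 9.5 to 5 is under 2x in relative terms and only 4.5 joules per terahash in absolute terms, which is the sense in which the machines are "closing in on zero."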

That should be a headwind on difficulty. For those who don't know, difficulty is by far the variable that plays the biggest role in hash price, which is the revenue that miners can expect per unit of hash.
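A short sketch shows why difficulty matters so much for hash price. It uses the standard relationship that finding a block takes about difficulty × 2^32 hashes on average; the function name and the difficulty, Bitcoin price, and fee inputs are hypothetical values for illustration, and 3.125 BTC is the block subsidy in effect since the April 2024 halving.

```python
SECONDS_PER_DAY = 86_400
HASHES_PER_TERAHASH = 1e12


def hashprice_usd_per_th_day(difficulty, btc_price_usd, subsidy_btc=3.125, fees_btc=0.0):
    """Expected daily revenue in USD for 1 TH/s of hash rate.

    A block takes about difficulty * 2**32 hashes on average, so 1 TH/s
    is expected to find SECONDS_PER_DAY * 1e12 / (difficulty * 2**32)
    blocks per day, each paying subsidy plus fees.
    """
    if difficulty <= 0:
        raise ValueError("difficulty must be positive")
    blocks_per_day = SECONDS_PER_DAY * HASHES_PER_TERAHASH / (difficulty * 2**32)
    return blocks_per_day * (subsidy_btc + fees_btc) * btc_price_usd


# Assumed inputs: difficulty of 1e14 and a $100,000 Bitcoin price.
base = hashprice_usd_per_th_day(1e14, 100_000.0)
doubled = hashprice_usd_per_th_day(2e14, 100_000.0)
print(f"hash price at difficulty 1e14: ${base:.4f} per TH/s per day")
print(f"hash price at difficulty 2e14: ${doubled:.4f} per TH/s per day")
```

Because difficulty sits in the denominator, doubling it halves hash price when the Bitcoin price and fees stay the same, so slower difficulty growth directly supports miner revenue per unit of hash.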

Then you also have the headwind of competition from AI companies. Before, there weren't these massive hyperscalers and neo clouds that wanted gigawatts of power. They want the same thing miners want, so they're going to be able to outbid us unless Bitcoin goes to $1 million a coin in a very short period of time. If they outbid us, where do the miners go? They're keeping the land and the power, and they're going to put GPUs there. So where do the machines go? They'll go to the Permian Basin where electricity prices are negative. They'll go to hydroelectric dams where you can get two to three cents per kilowatt hour. They'll go to places that have stable curtailment power purchase agreements, that are demand responders, that are strategic for utility providers in the local community. It all goes back to power.

Inside the actual data center, Rick, what do the next few years look like in terms of efficiency, racks, and cooling?

Making these chips smaller and smaller, making them more efficient, more effective, getting more terahash for less wattage, is going to keep happening. But the technology is changing.

From the AI side specifically, as an example, you have the Nvidia chips, which are really fantastic. The B100, 200, 300, and all the stuff that's coming out is fantastic. They're super fast. If you want to do inference, training, or whatever you want to do, it does it and it does a fantastic job, but at a price. You're talking about a $500,000 to $750,000 server.

There are different ways of getting to that same spot where you're making those tokens at the end that are worth the dollar, same as hashes. As an example, if my mom were picking up the kids for ice cream and going grocery shopping, she'd come in her vehicle, pick them up, go get ice cream, pick up two weeks of groceries, put it in the back of a minivan or whatever it is, drop us off, and leave. Then she goes to NASCAR. She gets into a race, jumps in that vehicle, and wins NASCAR with the same vehicle. This woman is tireless. She then jumps on a plane with that same vehicle, ships it over there because she's going to Dubai, wins the trophy truck race with the exact same vehicle, and then the next day comes and picks up the kids again and goes grocery shopping again.

That would be one expensive vehicle. That would be one of the most expensive vehicles you could ever think of. That is what Nvidia has done, and it's a great chip. It's a very expensive chip. Running inference, running Llama as an example, there are specific tasks, specific things in the driver, specific things in the firmware, and specific things in the way it communicates on the interconnect, on the motherboard, and everything else that occurs. That's all going to change.

If one vehicle were the best vehicle to do everything all the time, then Ford or Chevy would only have one vehicle. It doesn't work like that because there are specific tasks that this vehicle is good for, and that vehicle is good for something else. Instead of having these one-size-fits-all chips, I believe dedicated chips are coming in the very near future. It is something I am working on now. The increase in competition for Nvidia is what matters: a massive, massive increase.

David, final words. If you can talk a little bit about the next few years on your end when it comes to grid and flexibility, and if you also want to talk a little bit about the recent announcement you had in February with a few other companies, that would be great.

I get excited about this topic. Look at all the innovation that the other panelists have pointed at: direct liquid cooling, immersion miners, the wildcatting spirit of innovation on the mining side. That will continue to move to center on AI factory development and design because miners understand energy in a very sophisticated way.

You have traditional data center operators, your colos, who are really real estate investment trusts. They never had to know about energy. They pass through energy to their tenants. This is a major advantage for miners, in my opinion. As we meet in the middle with AI, miners have a clear innovation advantage. I'm really excited to see what's going to happen between now and 2030, because we have to get through until then, when we can build more power plants.

Flexibility as a design feature, along with energy arbitrage and demand response, gives you freedom of location. Demand response gives you that four-cent power in various markets where it exists, including New York, which people tend to avoid, and sometimes negative energy pricing. The understanding of energy arbitrage and flexibility, bringing the spirit of innovation to center with AI factory design, is what I'm really excited to see. I think we're going to solve this capacity problem that way with this group of people.

I love the optimism. Thank you. Thank you, everybody, for sharing your thoughts, and thank you to the audience for being here. Thank you very much.

Other

Speakers

Michael Saylor

Michael Saylor

Todd Blanche

Todd Blanche

Biography of Deputy Attorney General Todd Blanche

The Honorable Todd Blanche is the 40th Deputy Attorney General of the United States, overseeing the work of the 115,000 dedicated employees who fulfill the Department of Justice’s mission at Main Justice, the FBI, DEA, U.S. Marshals, ATF, and 93 U.S. Attorney’s Offices.

Todd began his career at the Department where he served for over fifteen years in a variety of capacities, including as a contractor, a paralegal in the Criminal Division, and at the United States Attorney’s office for the Southern District of New York where he eventually became an AUSA and later a supervisor.

After leaving the Department, Todd worked as a criminal defense attorney that included representing President Donald Trump in three of the criminal cases brought against him in 2023 and 2024.

Following President Trump’s historic return to the White House, the President appointed Todd to work alongside Attorney General Pam Bondi to make America safe again. At the DOJ, Todd is working tirelessly to implement President Trump’s priorities that include confronting illegal protecting American businesses from fraud.

Todd has been married to his wonderful wife Kristine for nearly thirty years, is a father and grandfather.

Paul Atkins

Prior to returning to the SEC, Chairman Atkins was most recently chief executive of Patomak Global Partners, a company he founded in 2009. Chairman Atkins helped lead efforts to develop best practices for the digital asset sector. He served as an independent director and non-executive chairman of the board of BATS Global Markets, Inc. from 2012 to 2015.

Chairman Atkins was appointed by President George W. Bush to serve as a Commissioner of the SEC from 2002 to 2008. During his tenure, he advocated for transparency, consistency, and the use of cost-benefit analysis at the agency. Chairman Atkins also represented the SEC at meetings of the President’s Working Group on Financial Markets and the U.S.-EU Transatlantic Economic Council. From 2009 to 2010, he was appointed a member of the Congressional Oversight Panel for the Troubled Asset Relief Program.

Before serving as an SEC Commissioner, Chairman Atkins was a consultant on securities and investment management industry matters, especially regarding issues of strategy, regulatory compliance, risk management, new product development, and organizational control.

From 1990 to 1994, Chairman Atkins served on the staff of two chairmen of the SEC, Richard C. Breeden and Arthur Levitt, ultimately as chief of staff and counselor, respectively. He received the SEC’s 1992 Law and Policy Award for work regarding corporate governance matters.

Chairman Atkins began his career as a lawyer in New York, focusing on a wide range of corporate transactions for U.S. and foreign clients, including public and private securities offerings and mergers and acquisitions. He was resident for 2½ years in his firm's Paris office and admitted as conseil juridique in France.

A member of the New York and Florida bars, Chairman Atkins received his J.D. from Vanderbilt University School of Law in 1983 and was Senior Student Writing Editor of the Vanderbilt Law Review. He received his A.B., Phi Beta Kappa, from Wofford College in 1980.

Originally from Lillington, North Carolina, Chairman Atkins grew up in Tampa, Florida. He and his wife Sarah have three sons.

Mike Selig

Chairman Selig brings to the role deep public and private sector experience working with a wide range of stakeholders across agriculture, energy, financial, and digital asset industries, which rely upon and operate in CFTC-regulated markets.

Prior to his leadership at the CFTC, Chairman Selig most recently served as chief counsel of the Securities and Exchange Commission’s Crypto Task Force and senior advisor to SEC Chairman Paul S. Atkins. In this role, Chairman Selig helped to develop a clear regulatory framework for digital asset securities markets, harmonize the SEC and CFTC regulatory regimes, modernize the agency’s rules to reflect new and emerging technologies, and put an end to regulation by enforcement. He also participated in the President’s Working Group on Digital Asset Markets and contributed to its report on “Strengthening American Leadership in Digital Financial Technology.”

Prior to government service, Chairman Selig was a partner at an international law firm, focusing on derivatives and securities regulatory matters. During his years in private practice, he represented a broad range of clients subject to regulation by the CFTC, including commercial end users, futures commission merchants, commodity trading advisors, swap dealers, designated contract markets, derivatives clearing organizations, and digital asset firms. Chairman Selig advised clients on compliance with the Commodity Exchange Act and the CFTC’s rules and regulations thereunder, including in connection with registration applications and obligations, enforcement matters, and complex transactions.

Chairman Selig earned his law degree from The George Washington University Law School and was articles editor of The George Washington Law Review. He received his undergraduate degree from Florida State University.

David Bailey

Eric Trump

Mr. Trump also serves as Executive Vice President of The Trump Organization, where he oversees the global management and operations of the Trump family’s extensive real estate portfolio. This includes Trump Hotels, Trump Golf, commercial and residential real estate, Trump Estates, and Trump Winery. Known for his hands-on leadership and strong market instincts, he has played a key role in expanding the company’s presence across major U.S. and international markets.

A globally recognized business leader and public figure, Mr. Trump is a prominent advocate for Bitcoin and decentralized finance. He is a co-founder of World Liberty Financial, a decentralized finance (DeFi) platform, and serves on the Board of Advisors of Metaplanet, Japan’s largest corporate holder of Bitcoin.

Beyond his business activities, Mr. Trump has helped raise more than $50 million for St. Jude Children’s Research Hospital in the fight against pediatric cancer, a philanthropic mission he began at age 21.

Mr. Trump earned a degree in Finance and Management from Georgetown University. He currently resides in Florida with his wife, Lara, and their two children. He is also the author of Under Siege, his memoir published in October 2025.

Jack Mallers

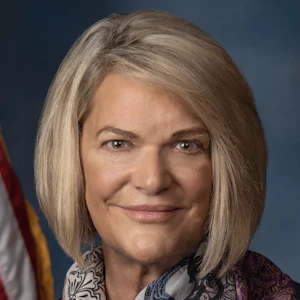

Cynthia Lummis

As the first-ever Chair of the Senate Banking Subcommittee on Digital Assets, Senator Lummis is the architect of the legislative framework shaping America's digital asset future. She introduced the landmark Lummis-Gillibrand Responsible Financial Innovation Act, the first comprehensive bipartisan crypto regulatory framework in Senate history. She co-authored the GENIUS Act — the first federal stablecoin law ever enacted — and introduced the BITCOIN Act, which would establish a U.S. strategic Bitcoin reserve of up to one million BTC. She is leading the Clarity Act, which will bring long-overdue regulatory certainty to the digital asset industry. She has also championed digital asset tax reform, including a de minimis exemption for small transactions and equal tax treatment for miners and stakers.

Known as Congress' "Crypto Queen," Senator Lummis represents Wyoming — a state she has helped build into one of the most digital asset-friendly regulatory environments in the nation. Before serving in the Senate, she served 14 years in the Wyoming Legislature, eight years as Wyoming State Treasurer, and eight years in the U.S. House. She is a three-time graduate of the University of Wyoming.

Her work represents a crucial bridge between traditional financial systems and the emerging digital economy, ensuring America leads the world in financial innovation while protecting the individual freedoms that define it.

Adam Back

Amy Oldenburg

David Marcus

Matt Schultz

Fred Thiel

Throughout his career, Mr. Thiel has consistently driven rapid growth and created substantial shareholder value. Prior to MARA, Mr. Thiel served as the CEO of two other public companies, Local Corporation (NASDAQ: LOCM) and Lantronix, Inc. (NASDAQ: LTRX). He has successfully raised billions in equity and debt through private and public offerings, led companies through IPOs, executed high-value exits to strategic and financial acquirers, and implemented effective M&A and roll-up strategies.

Mr. Thiel attended the Stockholm School of Economics and executive classes at Harvard Business School, and is fluent in English, Spanish, Swedish, and French. Mr. Thiel is the Chairman of the Board for Oden Technology, Inc. and is active in Young Presidents’ Organization where he has led initiatives in both the FinTech and Technology Networks.

A recognized voice in the industry, Fred frequently shares his insights on energy and technology with major media outlets like Bloomberg TV, CNBC, and FOX Business, contributing to vital discussions about the future of these sectors.

Tim Draper

He is a supporter and global thought leader for entrepreneurs everywhere, and is a leading spokesperson for Bitcoin and decentralization, having won the U.S. Marshals Service’s Bitcoin auction in 2014, invested in over 50 crypto companies, and led investments in Coinbase, Ledger, Tezos, and Bancor, among others.

Afroman